Quality Control in Industry with CV and TinyML

Quality control on production lines with computer vision (CV) and TinyML uses CV algorithms, running on TinyML-enabled devices, to automatically inspect products and detect defects as they move along the production line.

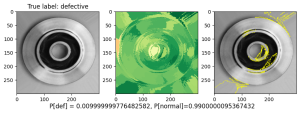

For example, when the model predicts that an image is defective, the green regions are those associated with the defective class and the red regions those associated with the normal class.

The third panel shows the region of the image that contributed most to the predicted class.

Asset Description

Computer vision (CV) algorithms can analyze images of products and compare them to a "good" or "reference" image to identify defects. These algorithms can be trained to detect a wide range of defects, including scratches, dents, misalignments, and missing components. Because they can work in real time, they can detect defects in products as they move along the production line and flag them for further inspection or rejection.
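The reference-image comparison described above can be illustrated with a minimal sketch. It assumes grayscale images of identical size given as nested lists of 0-255 intensities; the function names and both thresholds (pixel_threshold, max_defect_ratio) are hypothetical, and this rule is not the method used in the notebook, which trains a CNN classifier.

```python
def find_defect_pixels(image, reference, pixel_threshold=40):
    """Return (row, col) positions where |image - reference| exceeds the threshold.

    Both inputs are grayscale images given as equally sized nested lists of
    0-255 intensities (an assumption made for this sketch).
    """
    if len(image) != len(reference) or any(
        len(row) != len(ref_row) for row, ref_row in zip(image, reference)
    ):
        raise ValueError("image and reference must have the same shape")
    return [
        (r, c)
        for r, (row, ref_row) in enumerate(zip(image, reference))
        for c, (pixel, ref_pixel) in enumerate(zip(row, ref_row))
        if abs(pixel - ref_pixel) > pixel_threshold
    ]


def is_defective(image, reference, pixel_threshold=40, max_defect_ratio=0.01):
    """Flag the product if too large a fraction of pixels deviates from the reference."""
    defects = find_defect_pixels(image, reference, pixel_threshold)
    total_pixels = sum(len(row) for row in image)
    return len(defects) / total_pixels > max_defect_ratio


# Usage: a uniform 4x4 "good" part and a copy with one dark scratch pixel.
reference = [[200] * 4 for _ in range(4)]
scratched = [row[:] for row in reference]
scratched[1][2] = 50
print(find_defect_pixels(scratched, reference))  # [(1, 2)]
print(is_defective(scratched, reference))        # True (1/16 > 0.01)
print(is_defective(reference, reference))        # False
```

A pixel-wise comparison like this is sensitive to lighting changes and to misalignment between the part and the reference image; a trained classifier such as the CNN used in the notebook does not need an aligned reference image.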

TinyML is a field that involves developing machine learning models that can run on small, resource-constrained devices such as microcontrollers. In the context of quality control on production lines, TinyML can be used to enable the CV algorithms to run on embedded devices, such as cameras or sensors, that are integrated into the production line. This allows the system to process images and make decisions about defects without the need to send data to a separate computer for analysis.

By combining computer vision algorithms and TinyML technology, it's possible to create a real-time, automated quality control system that can detect defects in products as they are produced, improving the overall quality and efficiency of the production process.

Features

This asset includes a Jupyter notebook that presents a complete pipeline for:

| Feature | Description |
|---|---|
| Exploratory Data Analysis (EDA) on image data | Exploring and visualizing the datasets and their metrics with pandas DataFrames. |
| Data preparation and augmentation | Pixel values are scaled down to a smaller range, usually [0, 1]. We use the tf.keras.layers.Rescaling layer in TensorFlow for this standardization, so that the channel values of each image lie in the [0, 1] range. Data augmentation increases the diversity of the training set by applying random but realistic transformations, such as image rotation. |
| Image classification with TensorFlow | Deep learning (CNN) models |
| Model evaluation | Confusion matrix |
| Interpretation of model predictions | LIME |
| Transformation for use on embedded devices | Conversion to TFLite format |
| Post-training quantization | Quantization applied to an already trained model, either to the weights only or to both weights and activations. |
| Quantization-aware training | Quantization-aware training (QAT) trains the model with knowledge of the quantization that will reduce the precision of its weights and activations at deployment time. |
| Overall evaluation | Evaluation based on accuracy, recall, precision, F1-score, inference time, and model size. |
| Real-time usage of the developed models | Python script opencv_object_tracking.py |
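For reference, tf.keras.layers.Rescaling multiplies its inputs by scale and adds offset, so scale=1/255 with offset=0 maps 8-bit pixel values to [0, 1]. The sketch below reproduces that arithmetic in plain Python so it can be checked without TensorFlow, assuming 8-bit grayscale images stored as nested lists; it also shows a random rotation by multiples of 90 degrees as a hypothetical example of a "random but realistic" augmentation. Keras also offers preprocessing layers such as tf.keras.layers.RandomRotation for this purpose; which augmentation settings the notebook uses is not specified here.

```python
import random


def rescale(image, scale=1.0 / 255, offset=0.0):
    """Same arithmetic as tf.keras.layers.Rescaling: output = input * scale + offset."""
    return [[pixel * scale + offset for pixel in row] for row in image]


def rotate90(image):
    """Rotate a 2D image (nested lists) by 90 degrees clockwise."""
    return [list(row) for row in zip(*image[::-1])]


def random_rotate(image, rng):
    """Augmentation sketch: rotate by a random multiple of 90 degrees."""
    for _ in range(rng.randrange(4)):
        image = rotate90(image)
    return image


rng = random.Random(42)  # fixed seed so the augmentation is reproducible
image = [[0, 64], [128, 255]]
print(rescale(image))   # all values now lie in [0, 1]
print(rotate90(image))  # [[128, 0], [255, 64]]
print(random_rotate(image, rng))
```

Rotations by multiples of 90 degrees keep every pixel value and only change its position, so the label of the part is preserved.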
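Post-training quantization to int8 relies on an affine mapping between real values and 8-bit integers, real_value = (int8_value - zero_point) * scale, which is the scheme described in the TensorFlow Lite quantization specification. The sketch below is a simplified illustration, assuming asymmetric per-tensor quantization from a single min/max range (no per-channel weights and no calibration dataset); the function names are hypothetical and are not part of the TFLite converter API.

```python
def affine_params(values, qmin=-128, qmax=127):
    """Compute (scale, zero_point) so that [min, max] (extended to include 0) maps onto [qmin, qmax]."""
    lo = min(min(values), 0.0)  # the range must contain 0.0 so zero is exactly representable
    hi = max(max(values), 0.0)
    scale = (hi - lo) / (qmax - qmin) if hi > lo else 1.0
    zero_point = int(round(qmin - lo / scale))
    zero_point = max(qmin, min(qmax, zero_point))
    return scale, zero_point


def quantize(values, scale, zero_point, qmin=-128, qmax=127):
    """Map floats to clamped int8 values: q = round(v / scale) + zero_point."""
    return [max(qmin, min(qmax, int(round(v / scale)) + zero_point)) for v in values]


def dequantize(quantized, scale, zero_point):
    """Recover approximate floats: v = (q - zero_point) * scale."""
    return [(q - zero_point) * scale for q in quantized]


weights = [-1.0, 0.0, 0.5, 2.0]
scale, zero_point = affine_params(weights)
q = quantize(weights, scale, zero_point)
print(scale, zero_point)  # zero_point == -43 for this range
print(q)                  # first and last values hit -128 and 127
print(dequantize(q, scale, zero_point))
```

Because the range always includes 0.0, zero is represented exactly, and for values inside the range each round-trip error stays within half a quantization step (scale / 2).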
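The evaluation metrics listed above all follow from the binary confusion matrix. A minimal sketch, assuming "defective" is treated as the positive class; the label strings are illustrative and do not necessarily match the dataset's folder names.

```python
def confusion_counts(y_true, y_pred, positive="defective"):
    """Return (TP, FP, FN, TN) for a binary task with the given positive label."""
    tp = fp = fn = tn = 0
    for actual, predicted in zip(y_true, y_pred):
        if predicted == positive:
            if actual == positive:
                tp += 1
            else:
                fp += 1
        elif actual == positive:
            fn += 1
        else:
            tn += 1
    return tp, fp, fn, tn


def classification_metrics(y_true, y_pred, positive="defective"):
    """Accuracy, precision, recall and F1-score derived from the confusion counts."""
    tp, fp, fn, tn = confusion_counts(y_true, y_pred, positive)
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total if total else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


y_true = ["defective", "defective", "ok", "ok", "defective"]
y_pred = ["defective", "ok", "ok", "defective", "defective"]
print(confusion_counts(y_true, y_pred))        # (2, 1, 1, 1)
print(classification_metrics(y_true, y_pred))  # accuracy 0.6; precision, recall, F1 about 0.667
```

In quality control, recall on the defective class measures how many faulty parts are caught, while precision measures how many rejected parts were actually faulty.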

Usage

The notebook assumes that a dataset of labeled images of a product is available and demonstrates how to perform the following:

- Dataset overview

- Data preparation and augmentation

- CV model creation for image classification

- Model transformation to TinyML using TFLite

- Comparison between the original and the TFLite model
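A rough, back-of-the-envelope version of the size comparison between the original and the quantized model can be computed from the parameter count: float32 weights take 4 bytes each and int8 weights 1 byte. The sketch below assumes a small hypothetical CNN (not the notebook's architecture) and counts weight storage only; real .tflite files also store the graph and metadata, and in TFLite's full-integer scheme biases are kept as 32-bit integers, so measured reductions are somewhat below 4x.

```python
BYTES_PER_VALUE = {"float32": 4, "float16": 2, "int8": 1}


def conv2d_params(kernel_h, kernel_w, in_channels, out_channels, use_bias=True):
    """Weights of a 2D convolution: one kernel per (input, output) channel pair."""
    return kernel_h * kernel_w * in_channels * out_channels + (out_channels if use_bias else 0)


def dense_params(in_features, out_features, use_bias=True):
    """Weights of a fully connected layer."""
    return in_features * out_features + (out_features if use_bias else 0)


def weight_storage_bytes(num_params, dtype):
    """Storage needed for the weights alone (ignores graph structure and metadata)."""
    return num_params * BYTES_PER_VALUE[dtype]


# Hypothetical small CNN for 1-channel images: two 3x3 convolutions,
# global average pooling (no parameters) and one sigmoid output unit.
num_params = (
    conv2d_params(3, 3, 1, 16)
    + conv2d_params(3, 3, 16, 32)
    + dense_params(32, 1)
)
float_bytes = weight_storage_bytes(num_params, "float32")
int8_bytes = weight_storage_bytes(num_params, "int8")
print(num_params)                    # 4833
print(float_bytes, int8_bytes)       # 19332 4833
print(1 - int8_bytes / float_bytes)  # 0.75
```

Inference time cannot be estimated this way; it has to be measured on the target device, as in the notebook's overall evaluation.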

The asset is available. (Note: for demonstration purposes, we use the casting product image data for quality inspection dataset available on Kaggle. However, a similar approach can be applied to other industries and product lines.)

Walkthroughs

- Instructions available here

- A detailed step-by-step demonstration of the Quality Control asset can be found here

Licence

MIT License, licence link here

Resources

- Notebook available on GitHub

- Created by ExpertAI-Lux S.à r.l

- Contact george.fatouros@expertai-lux.com / george.makridis@expertai-lux.com

Acknowledgement

This work updates earlier work by ExpertAI-Lux S.à r.l in the context of the H2020 AI REGIO project (Grant Agreement No 952003). Further updates, performed in the context of the AI REDGIO 5.0 project, concern (1) leveraging TensorFlow Lite so that the model can be deployed on an edge device, and (2) post-training quantization to make the model more resource-efficient.