AI REDGIO 5.0 Open Hardware Platform


Asset Description

The Open Hardware Platform for Embedded Artificial Intelligence and AI-at-the-Edge represents a significant advance in bringing artificial intelligence (AI) to embedded devices at the edge. The platform is based on open hardware, which allows users and developers to modify and enhance the hardware to suit their specific needs. The Open Hardware Platform for Embedded AI and AI-at-the-Edge has a wide range of applications: it can be used in autonomous drones for image processing and real-time decision-making, in personal assistance devices for voice recognition and real-time interaction, or in industrial sensors for monitoring and autonomous decision-making.

Open Hardware System and Software Specifications

Open Hardware Specifications

The ESP32-WROVER-B module is built around an ESP32 chip with two low-power Xtensa® 32-bit LX6 microprocessors. The internal memory includes:

- 448 KB of ROM for booting and core functions.

- 520 KB of on-chip SRAM for data and instructions.

- 8 KB of SRAM in RTC, which is called RTC FAST Memory and can be used for data storage; it is accessed by the main CPU during RTC Boot from the Deep-sleep mode.

- 8 KB of SRAM in RTC, which is called RTC SLOW Memory and can be accessed by the co-processor during the Deep-sleep mode.

- 1 Kbit of eFuse: 256 bits are used for the system (MAC address and chip configuration) and the remaining 768 bits are reserved for customer applications, including flash encryption and chip ID.

Software Requirements

Deploying an artificial intelligence model on an ESP32 requires a combination of specific tools and libraries, both for model development and hardware implementation. The main software requirements are detailed below:

- Development Environment:

  - Arduino IDE or PlatformIO: Both are popular development environments for programming the ESP32. Arduino IDE is more beginner-friendly, while PlatformIO offers more advanced features.

- AI Libraries and Tools:

  - TensorFlow Lite for Microcontrollers: A version of TensorFlow designed for low-power devices such as the ESP32. It runs models that have been converted from TensorFlow into the TensorFlow Lite format directly on microcontrollers.

  - ESP32 TensorFlow Lite Arduino Library: A library that facilitates the integration of TensorFlow Lite models into Arduino projects for the ESP32.

- ESP32 Drivers and Libraries:

  - ESP32 Board Manager and ESP32 Libraries: These are needed to program and communicate with the ESP32 from the Arduino IDE.

  - PubSubClient (optional): Needed if you plan to integrate the ESP32 with MQTT for IoT communications.

- Modelling and Training Tools:

  - TensorFlow: The primary tool for designing, training and converting AI models for use on microcontrollers.

  - Python: TensorFlow and many other AI tools are Python-based, so a proper installation of Python and pip (the Python package manager) is essential.

- Conversion Tools:

  - TensorFlow Lite Converter: Once the AI model has been trained with TensorFlow, this tool converts it into a format that is compatible with TensorFlow Lite for Microcontrollers.

- Additional Dependencies (depending on the project):

  - Libraries for specific sensors or actuators involved in the project (e.g. temperature sensors, cameras, motors).

  - Communication libraries if specific connectivity is required (e.g. Wi-Fi, Bluetooth, LoRa).

- Debugging and Monitoring Tools:

  - Serial Monitor: Included in the Arduino IDE and PlatformIO, it displays the program output in real time, which is essential for debugging.

AI at the Edge with the AI REDGIO 5.0 Open Hardware Platform: Proof-of-Concept

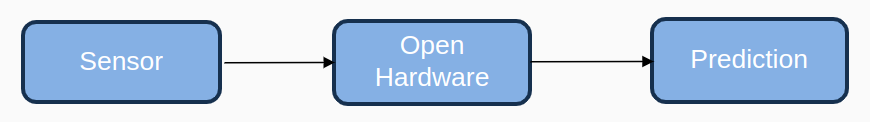

The main proof of concept validating the progress of this task is the successful integration of a rudimentary artificial intelligence (AI) model on the Open Hardware Platform, using the ESP32 development board as its basis. The example illustrates how AI models can be developed for manufacturing: an AI model makes air quality predictions from the data that a sensor would send via MQTT, as shown in the following Figure.

To achieve this, the first step is to generate an AI model capable of making the necessary predictions. In this example, the model is a tailor-made tool for predicting air quality parameters; specifically, it predicts the ozone (O3) concentration expected in the next hour. The prediction is based on reported values of particulate matter, such as PM10 and PM2.5, and of gases such as nitrogen dioxide (NO2), sulphur dioxide (SO2), ozone (O3) and carbon monoxide (CO). Once data preparation, model training, and model generation and optimisation are complete, the remaining steps (generating the Open Hardware code, compiling it and deploying it on the hardware) still have to be tested on real Open Hardware.
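As a minimal sketch of the model's interface, the C fragment below fixes one possible layout for the input vector. It assumes that the model takes exactly the six pollutant values listed above as float inputs and returns a single float (the predicted O3 concentration for the next hour). The names `aq_feature`, `aq_reading` and `aq_to_input` are hypothetical, and the feature order is an assumption that must match the column order used during training.

```c
/* Hypothetical input layout for the ozone prediction model.
 * Assumption: the order below matches the training column order. */
enum aq_feature {
    AQ_PM10 = 0,
    AQ_PM2_5,
    AQ_NO2,
    AQ_SO2,
    AQ_O3,
    AQ_CO,
    AQ_NUM_FEATURES /* six model inputs */
};

/* Assumption: the model has a single output, the O3 concentration in one hour. */
#define AQ_NUM_OUTPUTS 1

/* One set of reported pollutant values, in the units used for training. */
typedef struct {
    float pm10, pm2_5, no2, so2, o3, co;
} aq_reading;

/* Copies a reading into the flat float vector fed to the model's input tensor. */
void aq_to_input(const aq_reading *r, float input[AQ_NUM_FEATURES]) {
    input[AQ_PM10]  = r->pm10;
    input[AQ_PM2_5] = r->pm2_5;
    input[AQ_NO2]   = r->no2;
    input[AQ_SO2]   = r->so2;
    input[AQ_O3]    = r->o3;
    input[AQ_CO]    = r->co;
}
```

Keeping the order in one enum means that the training script and the firmware only have to agree on a single definition; a mismatch here would silently feed, for example, NO2 values into the SO2 input.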

AI on Open Hardware Deployment Steps

Deploying AI models on Open Hardware involves two distinct phases:

- Phase I: Model generation and translation into code that can run on Open Hardware

- Phase II: Implementation of the code on the Open Hardware and programming of the rest of the functionality

Phase I: Model generation and translation

The following steps are part of Phase I of preparing the AI model for deployment on the Open Hardware.

- First, the AI model is written in Python with the TensorFlow library, then trained and tested until it produces satisfactory results.

- The next step is to convert the model to the TensorFlow Lite format. This only requires a call to the TensorFlow Lite Converter (`tf.lite.TFLiteConverter`), whose output is saved as a `.tflite` file.

- The last step of this phase is to convert the `.tflite` file into C source code that holds the model as a byte array; for this, the Unix `xxd` command is used:

```sh
xxd -i input_file.tflite > output_file.cc
```

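The output of the command is a C array similar to the one below. `xxd` names the array and its length variable after the input file, so this example comes from a file called `converted_model.tflite`: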

```c
unsigned char converted_model_tflite[] = {
  0x18, 0x00, 0x00, 0x00, 0x54, 0x46, 0x4c, 0x33, 0x00, 0x00, 0x00, 0x0e, 0x00,
  /* <Lines omitted> */
};
unsigned int converted_model_tflite_len = 18200;
```
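TensorFlow Lite model files are FlatBuffers: the first 4 bytes hold the little-endian offset of the root table, and bytes 4 to 7 hold the file identifier "TFL3" (0x54, 0x46, 0x4c, 0x33). Before the array is handed to TensorFlow Lite for Microcontrollers (for example through `tflite::GetModel()`), a quick sanity check could look like the sketch below. The function name `is_tflite_model` is hypothetical, and the check only inspects the header; it does not validate the rest of the model.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical helper: returns 1 if buf starts like a TensorFlow Lite
 * FlatBuffer, 0 otherwise. Only the 8-byte header is inspected. */
int is_tflite_model(const unsigned char *buf, size_t len) {
    if (buf == NULL || len < 8) {
        return 0; /* too short for the root offset plus the identifier */
    }
    /* Bytes 0-3: little-endian offset of the root table. */
    uint32_t root = (uint32_t)buf[0] | ((uint32_t)buf[1] << 8) |
                    ((uint32_t)buf[2] << 16) | ((uint32_t)buf[3] << 24);
    if (root >= len) {
        return 0; /* the root table would lie outside the buffer */
    }
    /* Bytes 4-7: FlatBuffers file identifier, "TFL3" for TensorFlow Lite. */
    return memcmp(buf + 4, "TFL3", 4) == 0;
}
```

On the board, it could be called as `is_tflite_model(converted_model_tflite, converted_model_tflite_len)` during setup, so that a truncated or wrongly converted array is reported on the Serial Monitor instead of failing later inside the interpreter.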

Phase II: Implementation of the code on the Open Hardware

Open Hardware Platform users need to follow the connectivity, model loading and execution steps below to deploy their AI models on the platform.

1. Network Connection and Data Reception

The ESP32 board has been configured to connect to a Wi-Fi network using its built-in Wi-Fi capabilities, which make it well suited as an Internet of Things (IoT) device.

To receive data, we have incorporated the PubSubClient library. This library allows the board to receive data via the MQTT protocol, which is lightweight and efficient and therefore well suited to low-power devices. Our team is also exploring a REST API, which would allow data to be received over the more conventional HTTP protocol and could ease integration with other frameworks on the Edge.
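PubSubClient delivers each incoming message to a callback registered with `setCallback()`, with the signature `void callback(char* topic, byte* payload, unsigned int length)`; the payload is not NUL-terminated. The portable C sketch below shows how such a payload could be turned into model inputs. It assumes a hypothetical comma-separated format carrying the six values in the order PM10, PM2.5, NO2, SO2, O3, CO; the message format actually used in the proof of concept may differ (it could, for example, be JSON). `parse_aq_payload`, `AQ_NUM_FEATURES` and `AQ_MAX_PAYLOAD` are hypothetical names.

```c
#include <stdlib.h>
#include <string.h>

#define AQ_NUM_FEATURES 6   /* PM10, PM2.5, NO2, SO2, O3, CO (assumed order) */
#define AQ_MAX_PAYLOAD 128  /* hypothetical upper bound on message size */

/* Parses a payload such as "42.0,18.5,31.0,4.2,60.0,0.4" into out[].
 * Returns 0 on success, -1 if the payload is empty, too long or malformed. */
int parse_aq_payload(const unsigned char *payload, unsigned int length,
                     float out[AQ_NUM_FEATURES]) {
    char buf[AQ_MAX_PAYLOAD + 1];
    if (payload == NULL || length == 0 || length > AQ_MAX_PAYLOAD) {
        return -1;
    }
    memcpy(buf, payload, length);
    buf[length] = '\0'; /* MQTT payloads arrive without a terminating NUL */

    char *p = buf;
    for (int i = 0; i < AQ_NUM_FEATURES; i++) {
        char *end;
        out[i] = strtof(p, &end);
        if (end == p) {
            return -1; /* no number where one was expected */
        }
        if (i < AQ_NUM_FEATURES - 1) {
            if (*end != ',') {
                return -1; /* missing separator: too few values or a stray character */
            }
            p = end + 1;
        } else {
            while (*end == ' ' || *end == '\r' || *end == '\n') {
                end++; /* tolerate trailing whitespace */
            }
            if (*end != '\0') {
                return -1; /* extra values or stray characters */
            }
        }
    }
    return 0;
}
```

Inside the PubSubClient callback, this would be called as `parse_aq_payload(payload, length, values)`; copying into a bounded local buffer avoids reading past the end of the payload, which `strtof` would otherwise do because it expects a NUL-terminated string.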

2. Model Loading and Prediction

The next step uses the TensorFlowLite ESP32 library, which is available in the Arduino Integrated Development Environment (IDE). This library initialises the variables needed to load the AI model on the board and run it, so that the board obtains the same output that would be obtained by running the model on a more powerful computer.
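If the model was quantized to int8 during the optimisation step (an assumption; the data type of the model is not stated here), its input and output tensors hold integers, and values must be converted with the tensor's scale and zero point before and after inference. TensorFlow Lite uses the affine mapping real = scale × (q − zero_point), and TensorFlow Lite for Microcontrollers exposes both parameters as `tensor->params.scale` and `tensor->params.zero_point`. Reading an int8 output as if it were a float would make on-device predictions differ from those obtained on a PC. The helper names below are hypothetical; a float32 model needs neither of them.

```c
#include <stdint.h>

/* Converts a real input value to int8 using TensorFlow Lite's affine
 * quantization: q = round(x / scale) + zero_point, saturated to int8. */
int8_t quantize_int8(float x, float scale, int32_t zero_point) {
    double r = (double)x / scale;
    /* Bound r before the integer cast; int8 zero points lie in [-128, 127],
     * so values beyond +/-512 saturate anyway. */
    if (r > 512.0) r = 512.0;
    if (r < -512.0) r = -512.0;
    /* Round half away from zero, then apply the zero point. */
    long q = (long)(r >= 0.0 ? r + 0.5 : r - 0.5) + zero_point;
    if (q < -128) q = -128;
    if (q > 127) q = 127;
    return (int8_t)q;
}

/* Converts an int8 model output back to a real value (e.g. the O3 prediction). */
float dequantize_int8(int8_t q, float scale, int32_t zero_point) {
    return scale * (float)((int32_t)q - zero_point);
}
```

Each input value would be written as `quantize_int8(value, input->params.scale, input->params.zero_point)`, and the prediction read back with `dequantize_int8` using the output tensor's parameters.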

3. Compilation and Code Uploading to the Development Board

The final step of the development process is deploying the code onto the target device. Once the code has been configured and meets the requirements of the example, it is compiled, that is, transformed into a format that the development board can execute. The Arduino IDE simplifies this process: instead of complex command lines or scripts, it provides an "Upload" button. A single click on this button compiles the code and transfers it onto the development board.

4. Execution

Once the code has been uploaded, the board starts running it. In this example, the board is designed to receive data via MQTT, a lightweight messaging protocol designed for low-bandwidth, high-latency networks.

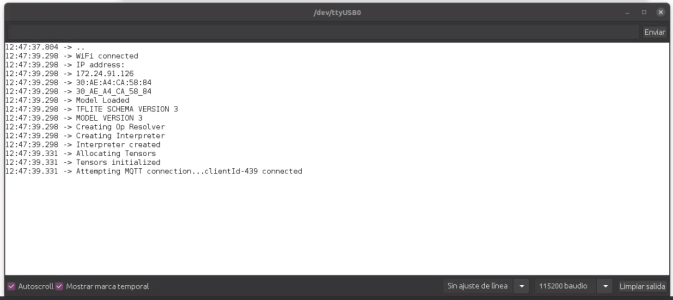

To monitor the board and check that it works as expected, the Arduino IDE provides another useful tool: the Serial Monitor, shown in the following Figure. It displays the data and messages printed by the board in real time, so the user can observe the data the board processes and verify that it is accurate and up to date.

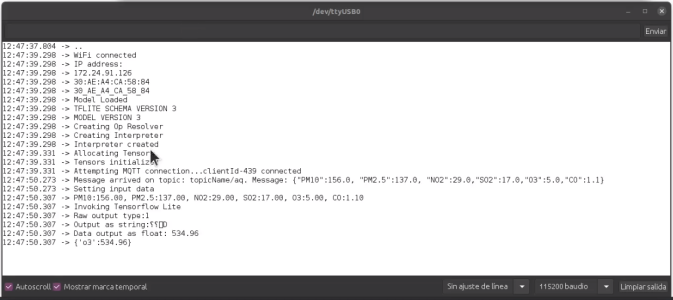

Activating the AI model embedded in the board requires no complex procedure: a simple MQTT message, sent to the topic to which the board is subscribed, is sufficient. The next Figure shows a message used during this proof of concept.

Once the board receives this message, it runs the AI model on the received data and produces a prediction. This integration of hardware and software, combined with user-friendly tools, makes tasks such as AI predictions accessible and straightforward. The prediction of the AI model can be seen in the following Figure.

Note: Importance of Version Consistency

A fundamental aspect that cannot be overlooked is the consistency of TensorFlow versions. The version used to train and validate the AI model must match the version used for its deployment on the ESP32 board. If the TensorFlow Lite library for ESP32 available in the Arduino IDE is chosen, TensorFlow version 2.1.1 must be used. Any other version can cause discrepancies, which show up as errors when the model is loaded or executed.

External Resources

- Examples and a guide for model onboarding on the Open Hardware Platform, available on GitHub

- Created by Libelium (HOPU)